Rock capturing with standard excavator buckets is a challenging task that typically requires skilled human operators. Unlike soil digging, it involves manipulating large, irregular rocks in unstructured environments, where complex contact interactions with granular material make model-based control impractical. Existing autonomous excavation approaches mainly target continuous media or rely on specialized grippers, limiting their applicability in real construction settings. This paper presents a fully data-driven control framework for rock capturing that avoids explicit modeling of rock or soil properties. A model-free reinforcement learning agent is trained in the AGX Dynamics® simulator using Proximal Policy Optimization (PPO) and a guiding reward formulation. The learned policy outputs joint velocity commands directly to the boom, arm, and bucket of a CAT®365 excavator model. Robustness is improved through extensive domain randomization of rock properties and initial configurations. Results show generalization to unseen rocks and soil conditions, achieving high success rates while maintaining machine stability.
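The abstract names PPO as the training algorithm but gives no training details on this page. As a rough illustration only, the standard clipped surrogate objective from the original PPO formulation can be sketched as below. The function name `ppo_clipped_surrogate` and the default `clip_eps=0.2` are assumptions for illustration, not values taken from the paper.

```python
from typing import Sequence


def ppo_clipped_surrogate(
    ratios: Sequence[float],
    advantages: Sequence[float],
    clip_eps: float = 0.2,  # assumed common default, not from the paper
) -> float:
    """Mean clipped surrogate objective (to be maximized) over a batch.

    ratios[i] is pi_new(a_i | s_i) / pi_old(a_i | s_i);
    advantages[i] is the advantage estimate for the same sample.
    """
    if len(ratios) != len(advantages) or len(ratios) == 0:
        raise ValueError("ratios and advantages must be non-empty and equal length")

    total = 0.0
    for ratio, adv in zip(ratios, advantages):
        # Restrict the probability ratio to [1 - eps, 1 + eps].
        clipped_ratio = min(max(ratio, 1.0 - clip_eps), 1.0 + clip_eps)
        # Take the pessimistic (smaller) of the unclipped and clipped terms.
        total += min(ratio * adv, clipped_ratio * adv)
    return total / len(ratios)
```

The clipping removes the incentive to move the policy far from the one that collected the data in a single update. That is one plausible reason PPO suits contact-rich tasks like this one, where large policy jumps could destabilize training.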

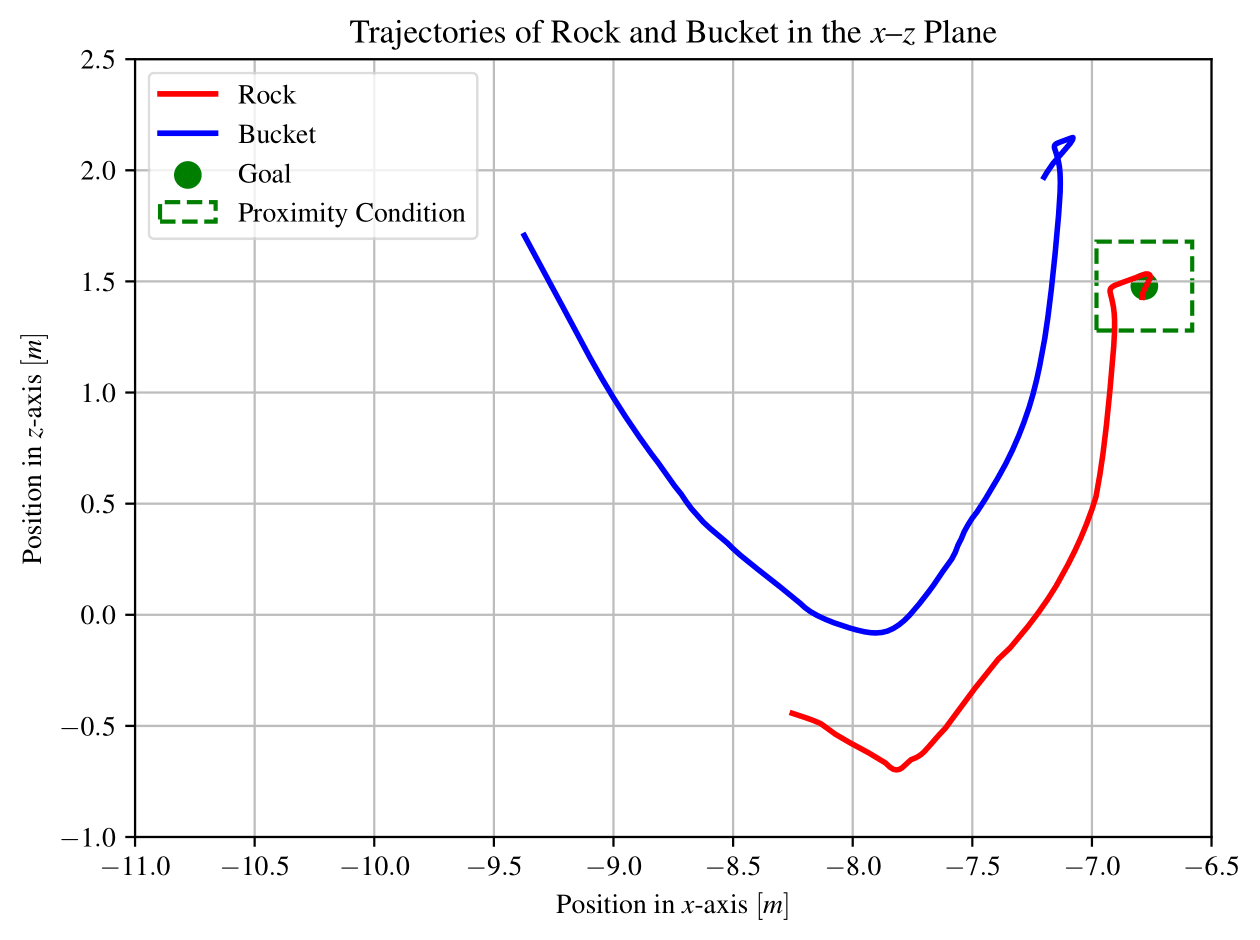

Scenario A. Training Conditions

Success rate: 79%

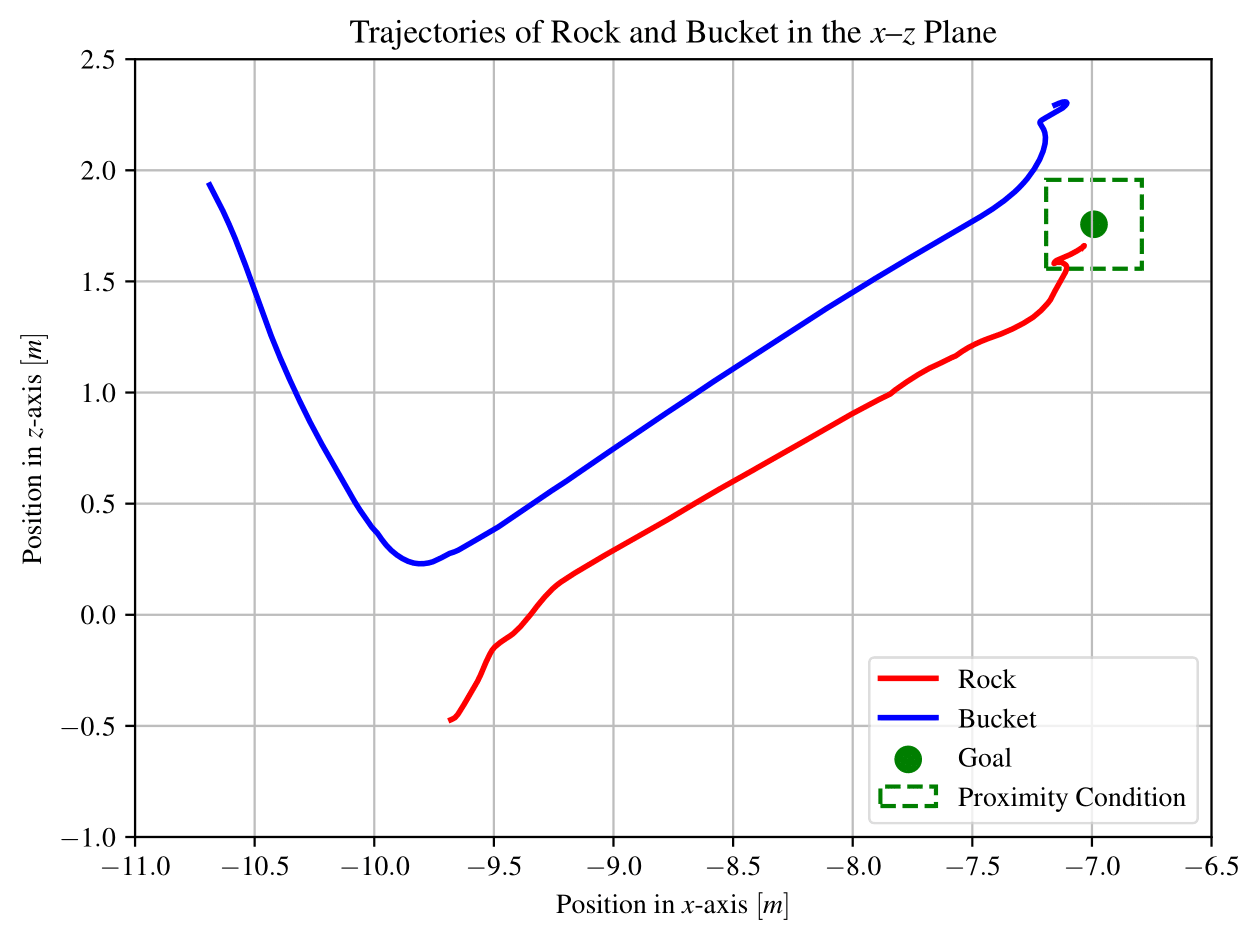

Scenario B. Unseen Rock Geometries

Success rate: 77%

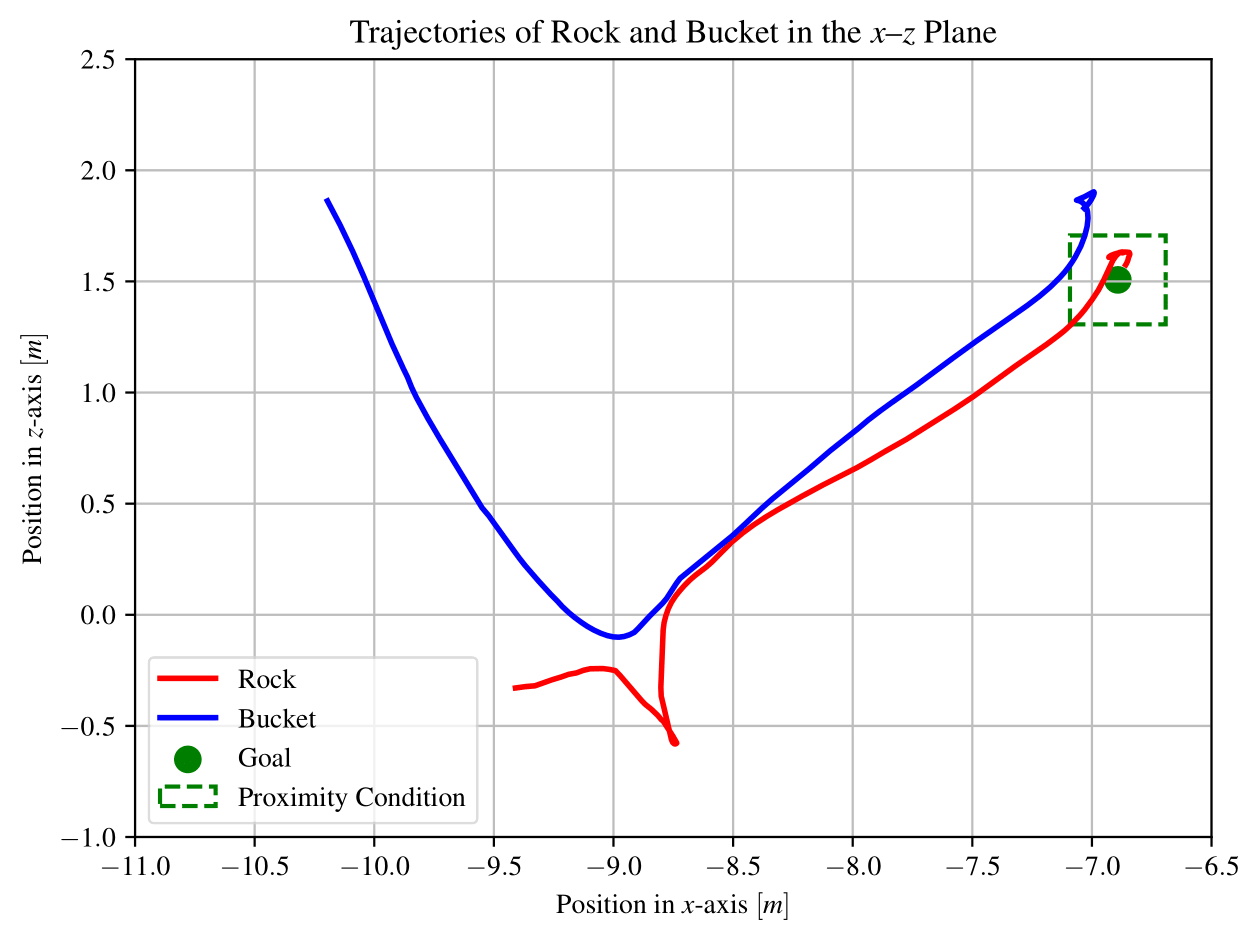

Scenario C. Unseen Material Properties

Success rate: 67%

For detailed analysis, we visualize a representative successful episode (Episode 20) sampled from the training process. The episode illustrates the learned policy’s nominal behavior under training conditions.

This figure shows the trajectories of the rock and the bucket in the x–z plane. The rock is successfully guided to the goal region and remains within the defined proximity threshold.
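The success condition described above, reaching the goal region and staying within a proximity threshold, could be checked on a logged trajectory roughly as follows. This is a minimal sketch under stated assumptions: the names `within_threshold` and `episode_succeeded`, the Euclidean distance in the x–z plane, and the `hold_steps` parameter are all hypothetical, and the paper's exact criterion may differ.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float]  # (x, z) position; units assumed to be metres


def within_threshold(rock_xz: Point, goal_xz: Point, threshold: float) -> bool:
    """True if the rock lies within `threshold` of the goal in the x-z plane."""
    dx = rock_xz[0] - goal_xz[0]
    dz = rock_xz[1] - goal_xz[1]
    return math.hypot(dx, dz) <= threshold


def episode_succeeded(
    rock_traj: Sequence[Point],
    goal_xz: Point,
    threshold: float,   # hypothetical proximity threshold
    hold_steps: int,    # hypothetical number of final samples that must stay close
) -> bool:
    """True if the rock stays within the threshold for the last `hold_steps` samples."""
    if hold_steps <= 0 or len(rock_traj) < hold_steps:
        return False
    return all(
        within_threshold(p, goal_xz, threshold) for p in rock_traj[-hold_steps:]
    )
```

For example, with a goal at `(2.0, 1.0)` and a threshold of `0.2`, a trajectory whose last three samples are `(1.9, 1.0)`, `(2.0, 1.0)`, and `(2.05, 0.98)` passes the check with `hold_steps=3`.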

The learned policy is evaluated on previously unseen rock geometries. Episode 5 is shown as a representative successful rollout, demonstrating generalization beyond the training geometry distribution.

The rock and bucket trajectories show that, despite the unseen rock geometry, the rock is successfully guided to the goal region and remains within the defined proximity threshold.

This scenario evaluates the robustness of the learned policy under unseen material properties. Episode 10 is selected as a representative successful rollout with altered material characteristics.

The trajectories in the x–z plane show successful task execution under unseen material properties, with the rock reaching and maintaining proximity to the target region.

@misc{molaei2025learningcapturerocksusing,
  title={Learning to Capture Rocks using an Excavator: A Reinforcement Learning Approach with Guiding Reward Formulation},
  author={Amirmasoud Molaei and Mohammad Heravi and Reza Ghabcheloo},
  year={2025},
  eprint={2510.04168},
  archivePrefix={arXiv},
  primaryClass={cs.RO},
  url={https://arxiv.org/abs/2510.04168},
}